Mistral AI Introduces Workflows for Orchestrating Enterprise AI Processes

Your AI models are capable. Your production deployments are not. Mistral's Workflows targets the infrastructure gap that stalls many enterprise AI pilots, and it is built on the same durable execution engine used by Netflix, Stripe, and Salesforce.

⚡ 01 — Introduction: The Production Gap

Every enterprise AI deployment story follows a familiar arc. A promising proof of concept built in a notebook. A demo that impresses leadership. Then — nothing. The pipeline that ran flawlessly on a laptop fails silently in production with no trace. A long-running document extraction job times out mid-way through. A contract approval workflow needs a human sign-off but has no mechanism to pause and wait.

This is the gap Mistral AI's Workflows is designed to close. Launched in public preview on April 28, 2026, Workflows is an orchestration layer for enterprise AI processes — not a new model, not a chatbot wrapper, but the infrastructure layer that makes AI-powered automation actually work in the messy reality of enterprise systems.

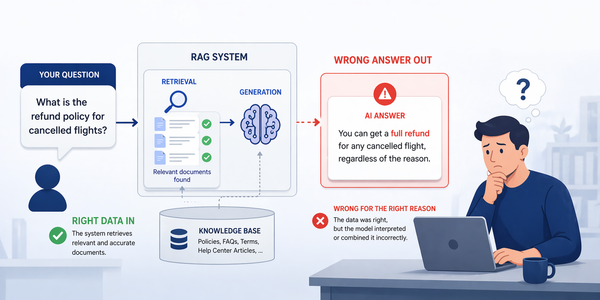

Enterprise teams today have access to capable models. What they lack is a way to run them reliably in production. The failure modes recur across industries: pipelines that fail silently once they leave the notebook, long-running processes that cannot survive a network timeout, multi-step operations that need human approval mid-execution but cannot pause and resume, and deployed systems that offer no way to verify they are still doing what they are supposed to do. Building the infrastructure to address these problems from scratch takes months of complex work. Workflows packages it as a managed layer.

📈 02 — Market Context & Why This Matters Now

The timing of this launch is deliberate. The agentic AI market has been valued at $10.9 billion in 2026 and is projected to reach $199 billion by 2034. Yet industry research also projects that over 40% of agentic AI projects will be canceled by 2027 because of high costs, unclear value, and complexity. Mistral is betting that Workflows can help its enterprise customers avoid becoming one of those statistics.

Workflows is the middle layer of the vertically integrated, three-part enterprise platform that Mistral assembled throughout 2026. At the bottom sits Forge, the custom model training platform launched at NVIDIA GTC in March. At the top sits Vibe, the coding agent interface. Workflows is the orchestration piece: it is where you blend deterministic business rules with agentic AI capabilities and put models to work on valuable tasks.

🏗️ 03 — Architecture: Two Planes, One Platform

Workflows introduces a deliberately split architecture that addresses the core tension in enterprise AI: centralized orchestration versus data sovereignty. The key design decision: separate the control plane from the data plane entirely, with execution workers running inside the customer's own infrastructure.

Mistral hosts the orchestration infrastructure: the Temporal cluster, the Workflows REST API, and the Studio UI. Customers deploy workers in their own Kubernetes environment using a separate Helm chart, and those workers connect back to the central cluster over outbound connections authenticated with secure credentials. The orchestrator never initiates connections into the customer's network.
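The outbound-only connection model can be pictured with a small in-memory sketch. The `ControlPlane` and `Worker` classes below are hypothetical stand-ins, not Mistral's or Temporal's API. They only illustrate the direction of the calls: the worker pulls tasks and pushes results, while the control plane holds no reference to the worker and never calls into it.

```python
from collections import deque
from typing import Deque, Dict, Optional, Tuple


class ControlPlane:
    """Hypothetical stand-in for the hosted orchestrator: it only answers requests."""

    def __init__(self) -> None:
        self._tasks: Deque[Tuple[str, str]] = deque()
        self.results: Dict[str, str] = {}

    def schedule(self, task_id: str, payload: str) -> None:
        self._tasks.append((task_id, payload))

    def poll(self) -> Optional[Tuple[str, str]]:
        # Called BY the worker; returns the next task or None if the queue is empty.
        return self._tasks.popleft() if self._tasks else None

    def complete(self, task_id: str, result: str) -> None:
        # Also called BY the worker, to report a finished task.
        self.results[task_id] = result


class Worker:
    """Hypothetical worker running inside the customer's network."""

    def __init__(self, control_plane: ControlPlane) -> None:
        self._control_plane = control_plane

    def run_once(self) -> bool:
        task = self._control_plane.poll()  # outbound request
        if task is None:
            return False
        task_id, payload = task
        result = payload.upper()  # placeholder for real business logic
        self._control_plane.complete(task_id, result)  # outbound request
        return True
```

Because every interaction starts from the worker, a deployment along these lines would need only egress rules on the customer side, with no inbound port opened for the orchestrator.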

🔧 04 — The Temporal Engine: Durable Execution Explained

At the heart of Workflows is Temporal, the open-source durable execution engine that powers orchestration at Netflix, Stripe, and Salesforce. Mistral extended it with AI-specific capabilities: streaming for LLM token output, larger payload handling, multi-tenancy for enterprise workspaces, and fine-grained observability not provided out of the box.

"Crashes don't lose work. Every step is recorded in an event history. When a process dies, another resumes from the last completed step — not from zero." — Mistral AI Workflows documentation

In conventional application code, a crash, timeout, or network error leaves a multi-step process half-finished. Teams then write custom state management, retry logic, and recovery code — essentially building a durable execution engine from scratch. Temporal eliminates this. Every important action is persisted in an append-only event log before the next step executes. A new worker replays that log and resumes exactly where the previous worker stopped.
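The replay idea can be shown in a few lines. This is a minimal sketch, assuming an in-memory log and zero-argument step functions; `EventLog` and `run_workflow` are illustrative names, and this is not how Temporal itself is implemented. A real engine persists the log durably and replays far richer event types.

```python
import json
from typing import Any, Callable, Dict, List, Optional, Tuple


class EventLog:
    """Append-only record of completed steps (in memory here for illustration)."""

    def __init__(self) -> None:
        self._events: List[str] = []

    def append(self, step: str, result: Any) -> None:
        # Events are only ever appended, never edited or removed.
        self._events.append(json.dumps({"step": step, "result": result}))

    def completed(self) -> Dict[str, Any]:
        return {e["step"]: e["result"] for e in map(json.loads, self._events)}


def run_workflow(
    steps: List[Tuple[str, Callable[[], Any]]],
    log: EventLog,
    crash_before: Optional[str] = None,
) -> List[str]:
    """Run steps in order, skipping any whose result is already in the log.

    `crash_before` simulates a worker dying just before the named step.
    Returns the names of the steps actually executed in this run.
    """
    done = log.completed()
    executed: List[str] = []
    for name, fn in steps:
        if name in done:
            continue  # replay: the recorded result is reused, the step is not re-run
        if name == crash_before:
            raise RuntimeError(f"worker crashed before {name}")
        log.append(name, fn())  # persist the result before moving on
        executed.append(name)
    return executed


steps = [
    ("extract", lambda: "contract text"),
    ("compliance", lambda: 0.4),
    ("update_crm", lambda: "ok"),
]
log = EventLog()
try:
    run_workflow(steps, log, crash_before="update_crm")
except RuntimeError:
    pass  # the first worker is gone; the log survives

print(run_workflow(steps, log))  # prints ['update_crm']
```

The second run executes only the step that had not completed, which is the property the paragraph above describes: recovery cost is proportional to the unfinished work, not to the whole workflow.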

What "Durable Execution" Actually Means for AI Workloads

For AI workflows specifically, durable execution solves several acute problems. A document review process that calls an LLM 40 times should not restart from page 1 because an API timed out on page 37. A multi-agent pipeline coordinating a research agent, a writing agent, and an approval agent should not lose its shared state on a worker restart. Workflows can run for anywhere from seconds to months, and after any failure they resume from the last committed checkpoint.

🗂️ 05 — Core Features Deep Dive

Durable Execution

Every step recorded in an append-only event log. Workers resume from the last checkpoint after any failure — crash, timeout, or worker restart. Built on Temporal's proven engine.

Human-in-the-Loop

A single decorator pauses execution for human approval. Workflows wait hours or days — no compute burned, no state lost, no connection held open. Resume when input arrives.

Observable by Default

OpenTelemetry traces with zero extra wiring. Live event streams, queryable execution history, and detailed timelines in Studio. Every decision, retry, and state change is logged.

AI Primitives Included

Native agent loop support, LLM token streaming to clients, and direct Mistral API integration — without writing the integration code yourself. AI is a first-class citizen.

Python-Native, Code-First

Developers write workflows as Python code — not drag-and-drop diagrams. Git-versionable, testable, code-reviewable. Business logic is the only thing you write; infrastructure is handled.

SDK-Layer Encryption

Payloads encrypted before they leave your worker. Mistral's platform stores only ciphertext. Keys remain under your control. Data never leaves your perimeter in plaintext.

| Feature | Description | Key Benefit | Status |
|---|---|---|---|
| Stateful execution | Event-sourced state machine; processes resume via log replay | Eliminates restart-from-zero failures permanently | GA |
| Pause & resume | Single decorator suspends workflow awaiting external signal | Human approval without burning compute or holding connections | GA |
| Retry policies | Configurable backoff, max attempts, error type filtering | Production resilience without custom retry code | GA |
| OpenTelemetry tracing | Distributed traces without extra instrumentation | Zero-config observability across every workflow step | GA |
| RBAC + Workspaces | Role-based access control with team/project isolation in Studio | Governance controls required for regulated industries | GA |
| LLM token streaming | Stream tokens to clients mid-workflow execution | Responsive UX for long-running AI tasks | Preview |
| SDK-layer encryption | Payload encrypted before leaving worker; platform holds ciphertext | Data never leaves your perimeter in plaintext | Preview |
| Multi-agent orchestration | Coordinate multiple specialized agents with hand-offs and shared state | Complex agentic pipelines at production scale | Roadmap |
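To make the "Retry policies" row concrete, here is a minimal sketch of exponential backoff with a maximum attempt count and error type filtering. `BackoffPolicy` and `call_with_retry` are hypothetical names written for this illustration, not the SDK's `RetryPolicy`; the `initial_interval` field and the default `retry_on` tuple are likewise assumptions.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple, Type, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class BackoffPolicy:
    """Hypothetical retry policy; field names are illustrative."""

    max_attempts: int = 3
    initial_interval: float = 1.0  # seconds before the first retry
    backoff_coefficient: float = 2.0  # multiplier applied after each failure
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError)

    def delays(self) -> List[float]:
        # One delay between each pair of consecutive attempts.
        return [
            self.initial_interval * self.backoff_coefficient ** i
            for i in range(self.max_attempts - 1)
        ]


def call_with_retry(
    fn: Callable[[], T],
    policy: BackoffPolicy,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying only on the policy's error types, up to max_attempts."""
    delays = policy.delays()
    for attempt in range(policy.max_attempts):
        try:
            return fn()
        except policy.retry_on:
            if attempt == policy.max_attempts - 1:
                raise  # attempts exhausted: surface the last error
            sleep(delays[attempt])
    raise ValueError("max_attempts must be at least 1")
```

Errors outside `retry_on` propagate immediately, which is the point of error type filtering: a validation error should fail fast, while a timeout is worth another attempt. Passing `sleep` as a parameter keeps the function easy to test without real waiting.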

💻 06 — The Python SDK: What Developers Actually Write

The Mistral Python SDK v3.0 handles all the complexity. Retry policies, tracing, timeouts, rate limiting, and human-in-the-loop are configured via decorators and single-line options. The only thing a developer writes is the business logic itself.

```python
# Install: pip install mistralai-workflows
from mistralai.workflows import workflow, activity, human_approval
from mistralai.workflows import RetryPolicy, timeout


# ── Workflow definition: the only thing you write is business logic ──
# The SDK handles: retry, tracing, timeouts, rate limiting, durability
@workflow(
    retry=RetryPolicy(max_attempts=3, backoff_coefficient=2.0),
    timeout=timeout(hours=24),
)
async def contract_review_pipeline(contract_id: str) -> dict:
    # Step 1: Extract structured data from PDF
    # If this crashes, the next worker replays from here on restart
    extracted = await extract_contract_data(contract_id)

    # Step 2: Run compliance checks via AI agent
    compliance = await run_compliance_agent(extracted)

    # Step 3: Pause for human approval if risk score is high
    # Workflow suspends here; no compute burned while waiting
    # Business users act via Le Chat; the workflow resumes with full state
    if compliance.risk_score > 0.7:
        approved = await human_approval(
            message=f"High-risk contract {contract_id} needs legal review",
            assignee="legal@company.com",
            timeout=timeout(days=3),  # wait up to 3 days
        )
        if not approved:
            return {"status": "rejected", "reason": "human_review"}

    # Step 4: Update CRM (idempotent, safe to retry)
    await update_crm(contract_id, status="approved")
    return {"status": "approved", "risk_score": compliance.risk_score}


# ── Deploy workers to your Kubernetes environment ──
# helm install mistral-worker mistralai/workflows-worker \
#   --set apiKey=$MISTRAL_WORKFLOWS_KEY \
#   --set workflowModule=contract_review_pipeline

# ── Publish to Le Chat so anyone in the org can trigger it ──
# mistral workflows publish contract_review_pipeline \
#   --name "Contract Review" --description "AI-powered contract compliance check"
```

The human_approval call suspends the entire workflow execution without burning compute or holding a connection open. When the reviewer acts via Le Chat, the workflow resumes exactly where it paused, with all prior state intact, including the extracted contract data and compliance scores. Achieving the same behavior with conventional serverless or queue-based architectures means building a custom state machine from scratch.
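The suspend-and-resume behavior can be approximated by hand to show what the platform takes off your plate. This is a minimal sketch, assuming the workflow state fits in a JSON document; `start_review` and `resume_review` are hypothetical helpers, and the 0.7 threshold is borrowed from the example above. No process stays alive between the two calls, only the serialized state.

```python
import json


def start_review(contract_id: str, risk_score: float) -> str:
    """Run until a human decision is needed, then return serialized state.

    Nothing blocks here: the caller stores the returned string and exits.
    """
    needs_approval = risk_score > 0.7
    state = {
        "contract_id": contract_id,
        "risk_score": risk_score,
        "status": "awaiting_approval" if needs_approval else "approved",
    }
    return json.dumps(state)


def resume_review(saved_state: str, approved: bool) -> dict:
    """Pick up from the stored state once the reviewer has answered."""
    state = json.loads(saved_state)
    if state["status"] != "awaiting_approval":
        return state  # nothing was pending, so the decision is ignored
    state["status"] = "approved" if approved else "rejected"
    return state


saved = start_review("C-1042", risk_score=0.9)  # could sit in storage for days
final = resume_review(saved, approved=True)
print(final["status"], final["risk_score"])  # prints: approved 0.9
```

Even this toy version has to decide where the state lives, how it is versioned, and what happens if the reviewer never answers. Those are the concerns the paragraph above says would otherwise require a custom state machine.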

⚙️ 07 — Deploying AI Models in Production

Workflows does not replace the ML frameworks engineers already know; it orchestrates them. TensorFlow, PyTorch, or scikit-learn models can be called as activities within a workflow, with the durability and observability layer wrapping every invocation. The same separation of concerns applies here: the workflow handles retries, state management, and the audit log, so each model-call activity stays simple and focused.
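As a rough illustration of that separation, the sketch below wraps a plain function in a decorator that records an audit entry for every call. The `audited_activity` decorator, the `AUDIT_LOG` list, and the `score_risk` function are all hypothetical; the averaging logic merely stands in for a real `model.predict(...)` call, and none of this is the SDK's own `activity` decorator.

```python
import functools
import time
from typing import Any, Callable, Dict, List

AUDIT_LOG: List[Dict[str, Any]] = []  # hypothetical in-memory audit trail


def audited_activity(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record the outcome and duration of each call, leaving fn itself untouched."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        started = time.monotonic()
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:
            AUDIT_LOG.append(
                {"activity": fn.__name__, "status": "failed", "error": repr(exc)}
            )
            raise  # the orchestration layer decides whether to retry
        AUDIT_LOG.append(
            {
                "activity": fn.__name__,
                "status": "ok",
                "seconds": time.monotonic() - started,
            }
        )
        return result

    return wrapper


@audited_activity
def score_risk(features: List[float]) -> float:
    # Stand-in for a framework call such as model.predict(features).
    return sum(features) / len(features)
```

The model-calling function contains no retry, logging, or state code of its own, which is what keeps it easy to test in isolation.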

📡 08 — Durability, Observability & Fault Tolerance

The practical impact of durable execution becomes clear in failure scenarios. Traditional enterprise AI projects rarely fail spectacularly — they fail slowly and expensively: a task times out during a customer handoff, a branch resumes from the wrong state, a document-processing step loses context, or an internal reviewer cannot find which action triggered a compliance exception.

- ✗ Worker crash at step 7 of 12: restart from step 1, re-run all LLM calls, re-incur all API costs
- ✗ Network timeout on a 3-hour document batch: entire job lost with no partial credit
- ✗ Human approval needed mid-process: send an email, wait, manually re-invoke the pipeline
- ✗ Compliance audit requests: no trace of which model call produced which output or why
- ✗ Silent failure: pipeline completes with wrong output, no error raised, no alert fired

- ✓ Worker crash at step 7: new worker replays event log and resumes at step 7, steps 1–6 skipped
- ✓ Network timeout: workflow pauses at the last committed checkpoint, resumes when connectivity restores
- ✓ Human approval: single decorator pauses execution; reviewer acts in Le Chat, workflow resumes
- ✓ Compliance audit: complete immutable event log of every decision, retry, and state transition
- ✓ Observable alerts: OpenTelemetry traces flag anomalies before they reach end users

Treat workflow_failure_recovery_rate as a first-class SLO alongside latency and throughput. A high recovery rate (workflows resuming from checkpoint vs. restarting from scratch) demonstrates the durability layer is working. A declining recovery rate is an early indicator of event log or worker configuration problems — visible before they manifest as end-user failures or data loss.
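One way to compute such a metric is sketched below. The definition used here (checkpoint resumes divided by all failure recoveries) and the 0.99 target are assumptions for illustration; the source does not specify a formula or threshold.

```python
from typing import Optional


def recovery_rate(
    resumed_from_checkpoint: int, restarted_from_scratch: int
) -> Optional[float]:
    """Share of failed executions that resumed from a checkpoint.

    Returns None when there were no failures in the window, since the
    rate is undefined rather than perfect or zero.
    """
    failures = resumed_from_checkpoint + restarted_from_scratch
    if failures == 0:
        return None
    return resumed_from_checkpoint / failures


def meets_slo(rate: Optional[float], target: float = 0.99) -> bool:
    """Treat a window with no failures as meeting the objective."""
    return rate is None or rate >= target


# Example window: 90 failures resumed from a checkpoint, 10 restarted from zero.
rate = recovery_rate(resumed_from_checkpoint=90, restarted_from_scratch=10)
print(rate, meets_slo(rate))  # prints: 0.9 False
```

Tracking the two counters separately, rather than only the ratio, makes it easier to tell a genuinely declining rate from a window with too few failures to be meaningful.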

🛡️ 09 — Security, Governance & Data Sovereignty

For regulated industries — finance, healthcare, logistics, and government — the security model is often the deciding factor. Workflows addresses this at the architecture level, not as a feature checklist appended after the fact.

🏆 10 — Competitive Landscape

AI orchestration platforms are rapidly becoming the backbone of enterprise AI systems in 2026. As businesses deploy multiple AI agents, tools, and LLMs, the need for unified control, oversight, and efficiency has never been greater. Mistral's differentiation rests on three explicit pillars: vertical integration with its own model stack, European data sovereignty positioning, and a code-first philosophy that aligns with engineering teams over low-code tooling.

Vertical Integration + Data Sovereignty

Temporal-backed durable execution. Python-native, code-first. Vertically integrated with Forge (model training) and Vibe (coding agent). Full data sovereignty with workers in your VPC and EU-regulated cloud options.

Deep AWS Ecosystem + Multi-Provider

Deep AWS ecosystem integration across S3, Lambda, SageMaker. Multi-model and multi-provider support. Strong observability via CloudWatch. Broad connector library for AWS-native enterprises.

Microsoft / OpenAI Integration + Enterprise SSO

Tight Microsoft and OpenAI integration. Copilot Studio for business users. Strong enterprise SSO and compliance stack. Azure AI Foundry packaging orchestration, observability, and workflow controls as production infrastructure.

Maximum Flexibility + Large Ecosystem

Maximum architectural flexibility and control. Large developer community and ecosystem. Over 100 native document connectors in LlamaIndex. No built-in durability guarantees or managed human-in-the-loop capabilities.

Prashanth Velidandi, commenting on the launch: "Finally getting a proper orchestration layer, but in practice, the issues still show up one level below. The hard part in enterprise orchestration is not chaining agents — it's deciding what happens when an agent is half-right." This captures the real challenge that Workflows must still address: rollback semantics, partial-completion handling, and auditability of probabilistic AI decisions in regulated workflows. Workflows addresses the infrastructure layer; the semantic validation layer is still the team's responsibility.

🏢 11 — Early Customers & Real-World Use Cases

Mistral reports millions of daily workflow executions at launch, with six named enterprise customers already running Workflows in production across logistics, banking, energy, semiconductors, and government sectors.

| Customer | Sector | Use Case | Why Workflows |
|---|---|---|---|
| ASML | Semiconductor equipment | Document processing and compliance automation for chip manufacturing specifications | Audit trail required for semiconductor compliance; human approval on spec changes |
| ABANCA | Financial services (Spain) | Regulated financial workflows with multi-step approval gates and full audit trails | EU banking regulation requires immutable audit logs and human sign-off on decisions |
| CMA-CGM | Global shipping / logistics | Freight release approvals and logistics document automation at container scale | Long-running cross-timezone approval chains; single HITL decorator for freight gates |
| France Travail | Public sector (France) | Citizen-facing process automation with strict French GDPR and sovereignty requirements | Data residency mandates; Mistral's EU-native infrastructure and French cloud option |
| La Banque Postale | Postal banking (France) | Regulated multi-step financial processes with mandatory human-in-the-loop steps | French banking regulation; vertical stack means no cross-vendor data leakage risk |
| Moeve | Energy sector | Operational process automation for critical infrastructure workflows | Durability requirements for critical infrastructure; no single point of failure in execution |

⚠️ 12 — Common Anti-Patterns to Avoid

🔭 13 — Roadmap & Conclusion

Build on the Infrastructure Layer — Not Around It

Mistral Workflows marks a meaningful shift in what enterprise AI infrastructure can look like. Its biggest promise is not that it makes models smarter — it is that AI-powered processes can become durable, observable, correctable, and auditable inside real enterprise systems.

In 2026, enterprises do not just need better models. They need the orchestration layer that connects models to real work without losing control. The technology is sound. The Temporal foundation is proven at Netflix and Stripe scale. The architecture addresses the right problems at the right layer.

The evaluation question is not whether durable AI orchestration is valuable — it clearly is. The question is whether Mistral can hold this position against hyperscaler bundling and open-source alternatives. That depends on execution: proving that millions of daily executions represent genuine production scale, and that the hard parts — rollback semantics and auditability of probabilistic AI decisions — are as solved as the launch messaging suggests.
