Designing Cognitive Memory for AI Agents

AI agents fail at continuity due to stateless design. LinkedIn’s Cognitive Memory Agent (CMA) introduces persistent, structured memory—episodic, semantic, procedural - enabling agents to retain context, adapt over time, and move from prompt-driven responses to truly stateful intelligence.

Designing Cognitive Memory for AI Agents: Inside LinkedIn's CMA

LinkedIn's Cognitive Memory Agent (CMA) is redefining what production-grade AI means — not just smarter models, but smarter memory...

🧩1. The Statelessness Problem — Why LLMs Forget

Every time you call an LLM API, you start from zero. The model has no memory of your previous conversation, your preferences, your history, or the decisions you made together last week. This is not a bug — it is the fundamental design of large language models. The transformer architecture processes whatever tokens are in the current context window, generates a response, and terminates. No persistent state is maintained between calls.

For simple chatbots, this limitation was manageable: just pass the conversation history back in the prompt each time. But as AI agents evolved to perform multi-step, long-horizon tasks — evaluating hundreds of candidates, managing ongoing customer relationships, operating infrastructure over days and weeks — the statelessness problem became a fundamental architectural blocker. You cannot build a production-grade hiring assistant that forgets every recruiter preference on every page reload.

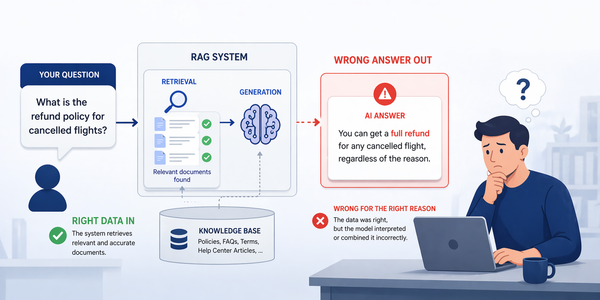

In general, you will see more hallucinations with a longer context. Every token added to the context window increases the probability of the model losing track of earlier content. This is the "lost in the middle" phenomenon — critical information buried in long contexts gets systematically ignored. Production agents cannot simply stuff all history into the prompt and hope for the best.

At LinkedIn, Karthik Ramgopal, Distinguished Engineer, framed it clearly: "Good agentic AI isn't stateless: It remembers, adapts, and compounds. One of the key capabilities enabling this is memory that lives beyond context windows."

- ✗User says "use the same format as last time" — agent has no idea what that means

- ✗Support bot asks the same clarifying questions every session

- ✗Recruiting agent forgets all candidate preferences between log-ins

- ✗Repeated context injection drives up token costs exponentially

- ✗No ability to learn from past mistakes or user corrections

- ✗Every interaction starts cold, personalization is impossible at scale

- ✓Recalls past interactions and user preferences across sessions

- ✓Continues where it left off — true conversational continuity

- ✓Learns recruiter-specific patterns and organizational norms

- ✓Reduces redundant reasoning, cuts token spend by compacting history

- ✓Improves over time through procedural memory of successful patterns

- ✓Personalizes responses at scale without re-prompting every context

🧠2. The CoALA Framework — Four Memory Types

In 2023, researchers at Princeton published the CoALA framework (Cognitive Architectures for Language Agents). It defines four types of memory drawn from cognitive science and the SOAR architecture of the 1980s. Every major framework in the field — LinkedIn's CMA, Mem0, Letta, Zep — builds on this taxonomy. It answers a fundamental question: what options do engineers have for adding persistent memory to an AI agent?

Active Context Window

Temporary, session-bound storage that lives entirely within the LLM's context window. Holds the live conversation, current task state, tool outputs, and retrieved memories. Think of it as RAM — fast but limited. Most current agents only have this type.

Interaction History & Events

Timestamped logs of past interactions stored across sessions. An episodic record captures not just what was said, but when it happened, what the outcome was, and how the user felt about it. Retrieved via recency (most recent N) or semantic search. Stored externally in vector DBs.

Structured Facts & Knowledge

Curated, distilled knowledge derived from episodes. A semantic fact might be "User prefers concise bullet-point summaries over long prose." Unlike episodic memory, not everything goes in — the agent (or platform) decides what is worth preserving as a lasting truth versus situational context. Stored in graph DBs or key-value stores.

Skills, Workflows & Patterns

Encodes how to perform tasks — executable skills, behavioral patterns, and learned heuristics. Exists in two forms: implicit (baked into model weights during training) and explicit (defined through prompts, code, and workflow templates). As agents gain experience, frequently used procedures become more efficient.

Imagine you are in a meeting. Your working memory holds what is being discussed right now. Your procedural memory knows how to take notes and when to speak up. Your semantic memory reminds you that Sarah's team prefers Slack over email. Your episodic memory recalls that the last time you proposed this feature, the VP shut it down because of budget constraints. An agent needs all four types working together. Most agents today only have working memory.

🏗️3. LinkedIn CMA — Architecture & Layers

LinkedIn's Cognitive Memory Agent (CMA) is a production-proven implementation of the CoALA framework, deployed to power their Hiring Assistant — announced publicly in October 2025. It represents one of the most detailed publicly documented examples of memory-driven agentic AI at enterprise scale, processing thousands of candidate evaluations while maintaining per-recruiter, per-company, and cross-industry context.

CMA functions as a shared memory infrastructure layer between application agents and underlying language models. Instead of reconstructing context through repeated prompting, agents persist, retrieve, and update memory through a dedicated system — enabling continuity, reducing redundant reasoning, and improving personalization in production environments where user context evolves.

The Three CMA Memory Layers

CMA organizes memory into three layers that map directly to the CoALA taxonomy, each with distinct storage requirements and retrieval mechanics:

Memory Lifecycle Management in CMA

A key insight from LinkedIn's production deployment is that memory is not just storage — it requires a complete lifecycle with clear policies at every stage. CMA integrates multiple retrieval and lifecycle management mechanisms to address the core engineering challenges at scale:

🔍4. Memory Lifecycle — Ingest to Evict

Understanding the full memory lifecycle is essential for building production-grade memory systems. The four canonical stages — Ingestion, Storage, Retrieval, and Eviction — map to specific engineering choices that have major implications for latency, accuracy, consistency, and cost.

Storage Backend Architecture — Why Monolithic Approaches Fail

One of the most common mistakes in early memory implementations is choosing a single database type and forcing all memory through it. The engineering reality is that each memory type requires fundamentally different data structures, storage mechanisms, and retrieval algorithms. Vector-only databases miss temporal and causal relationships. Relational databases are too rigid for unstructured conversational data. Graph databases are powerful but slow for simple similarity lookups.

| Memory Type | Ideal Storage Backend | Query Mechanism | Why Monolithic Fails | Production Example |

|---|---|---|---|---|

| Working (In-context) | LLM context window (RAM) | Direct token injection — no external query needed | Not applicable — no persistent storage needed | All LLM frameworks — native |

| Episodic (Interaction logs) | Vector DB + Time-series (Pinecone, Weaviate, pgvector) | ANN similarity search + timestamp range filters | Pure vector DB misses temporal ordering: "What happened last Monday?" fails without time filters | Mem0, Zep, LinkedIn CMA |

| Semantic (Facts & knowledge) | Graph DB (Neo4j, Memgraph) + KV store (Redis) | Graph traversal (Cypher) + exact field lookup | Vector DB finds semantically similar facts but misses causal links: "Why did user switch from React?" requires graph reasoning | Zep/Graphiti, Cognee, MAGMA |

| Procedural (Skills & workflows) | Prompt store + fine-tuning feedback + code registry | Semantic lookup of workflow templates; classifier for pattern routing | Cannot be stored as embeddings alone — requires structured execution schemas with input/output specifications | LinkedIn CMA, CrewAI, LangGraph |

| Collective (Org-scoped) | Multi-tenant relational DB with row-level security + shared vector index | Scoped queries with org/role context filters applied before retrieval | Namespace-level separation (vector-only) is insufficient for regulated industries requiring row-level ACID isolation | LinkedIn CMA (Hiring Assistant) |

⚡5. Retrieval Mechanisms & Latency Trade-offs

Retrieval is where the latency-accuracy trade-off becomes concrete. The Mem0 LOCOMO benchmark documents this precisely: the full-context approach achieves 72.9% accuracy but carries 17.12-second p95 latency. Mem0's selective memory retrieval achieves 66.9% accuracy with 1.44-second latency — 91% faster, at a 6-point accuracy cost. For production agents, this is not a theoretical concern — it determines whether your agent feels responsive or broken.

LinkedIn's Three Retrieval Techniques

🏢6. Collective Memory & Multi-Tenancy

LinkedIn's most architecturally distinctive contribution is the concept of Collective Memory — memory that is scoped at different levels of organizational granularity. This concept did not exist in the original CoALA taxonomy; LinkedIn introduced it specifically to address the needs of enterprise-grade agentic systems where knowledge at one level (what a single recruiter prefers) should inform but not override knowledge at a higher level (what all tech recruiters across all companies do).

In complex multi-agent architectures, simultaneous read and write operations across a shared database dramatically worsen memory conflicts. Namespace-level separation (typical in vector-only databases) is not the same as row-level security that regulated industries require. Oracle's native PDB/CDB architecture provides inherent multi-tenant isolation. For enterprise CMA deployments, implement database isolation levels inspired by ACID transactions: updating a vector embedding, modifying a graph relationship, and changing relational metadata must all succeed or all fail.

🧰7. Production Memory Frameworks Compared

The ecosystem of agent memory frameworks has matured rapidly. By April 2026, six primary frameworks have emerged as production-ready options, each with distinct architectural philosophies. The key insight: these are not interchangeable — the choice of framework is an architectural decision that shapes your agent's capabilities, lock-in risk, and operational complexity for years.

| Framework | Architecture | Memory Types | LongMemEval | Graph Memory | Pricing | Best For |

|---|---|---|---|---|---|---|

| Mem0 | Memory Layer API | Episodic + Semantic | 66.9% (1.44s p95) | Pro only ($249/mo) | Free → $249/mo | Drop-in memory for chatbots, personalization at scale. 48K+ GitHub stars. Framework-agnostic. |

| Zep / Graphiti | Temporal Knowledge Graph | Episodic + Semantic (temporal) | 63.8% (temporal LOCOMO) | Yes (Neo4j) — core feature | OSS + $25/mo cloud | Agents that reason about how facts change over time. Enterprise workflows with temporal entity relationships. |

| Letta (MemGPT) | OS-Inspired Agent Runtime | Core (RAM) + Recall + Archival | 83.2% | Via archival storage | Open source + cloud | Long-running agents with unlimited context. Self-editing memory. Agents control their own memory via function calls. |

| OMEGA | Local-First, Zero-Cloud | All four CoALA types | 95.4% (SOTA 2026) | Yes (SQLite + ONNX) | Free (pip install) | Data-sovereign deployments. Claude Code / Cursor integration. AES-256 at rest. No external dependencies. |

| Hindsight | Reflection-Based Memory | Episodic + Semantic + Procedural | 91.4% (Gemini-3 Pro) | Yes — all tiers | Free self-hosted | Self-improving agents. Writes verbal post-mortems and stores conclusions for future runs. |

| LangMem / LangChain | LangGraph-Native Module | Episodic + Semantic | Varies by backend | Via LangGraph nodes | Free (OSS) | Teams already on LangChain/LangGraph. Zero additional infrastructure. Modular memory strategies. |

| Cognee | Local Graph-RAG | Semantic (knowledge graph) | Not published | Yes — core design | Open source | Air-gapped, privacy-critical deployments. Knowledge-graph-first RAG workflows. |

| Supermemory | MCP-Native Memory | Episodic + Semantic | 85.4% | Via graph backend | OSS + cloud | Coding agents (Claude Code, Cursor, Windsurf). MCP-native integration. Fast setup. |

Framework Selection Decision Tree

💻8. Implementing CMA — Code & Patterns

LinkedIn's CMA is an internal infrastructure platform, but its architecture can be replicated using available open-source primitives. The following patterns translate the documented CMA architecture into production-ready Python code using publicly available frameworks.

from mem0 import MemoryClient from langgraph.graph import StateGraph, END from typing import TypedDict, List, Optional import datetime # ── 1. Memory Manager Layer ───────────────────────────────── class CMAMemoryManager: """ CMA-inspired memory manager implementing: - Episodic: timestamped session logs - Semantic: distilled user facts - Procedural: learned workflow patterns - Collective: org-scoped shared knowledge """ def __init__(self, user_id: str, org_id: str): self.client = MemoryClient() # Mem0 handles vector + graph self.user_id = user_id self.org_id = org_id def ingest_episode(self, messages: List[dict]) -> str: # Episodic write: timestamped session with boundary metadata return self.client.add( messages, user_id=self.user_id, metadata={ "scope": "episodic", "org_id": self.org_id, "ts": datetime.utcnow().isoformat(), } ) def retrieve_context(self, query: str, k: int = 5) -> str: # Three-strategy retrieval: recent + semantic search + org-collective personal = self.client.search(query, user_id=self.user_id, limit=k) collective = self.client.search( query, filters={"org_id": self.org_id}, # Collective org-scoped memory limit=3 ) return _format_memories(personal + collective) # ── 2. LangGraph State ────────────────────────────────────── class AgentState(TypedDict): messages: List[dict] retrieved_ctx: str response: Optional[str] memory_written: bool # ── 3. Graph Nodes ────────────────────────────────────────── def retrieve_memory(state: AgentState) -> AgentState: query = state["messages"][-1]["content"] state["retrieved_ctx"] = memory.retrieve_context(query) return state def generate_response(state: AgentState) -> AgentState: # Inject retrieved memory into LLM context (working memory) augmented_prompt = build_prompt(state["messages"], state["retrieved_ctx"]) state["response"] = llm_call(augmented_prompt) return state def consolidate_memory(state: AgentState) -> AgentState: # Episodic write after interaction; async consolidation happens separately memory.ingest_episode(state["messages"] + [{ "role": "assistant", "content": state["response"] }]) state["memory_written"] = True return state # ── 4. Assemble Graph ─────────────────────────────────────── graph = StateGraph(AgentState) graph.add_node("retrieve", retrieve_memory) graph.add_node("generate", generate_response) graph.add_node("consolidate", consolidate_memory) graph.set_entry_point("retrieve") graph.add_edge("retrieve", "generate") graph.add_edge("generate", "consolidate") graph.add_edge("consolidate", END)

import asyncio from anthropic import Anthropic client = Anthropic() async def consolidate_episodes_to_semantic(episodes: list[str]) -> dict: """ Background consolidation job: episodic → semantic memory. Runs periodically (e.g., daily) to distill patterns from raw episodes. Mimics human sleep consolidation — only signal survives, not every detail. """ prompt = f"""Analyze these past interaction episodes and extract: 1. Durable user facts (preferences, constraints, relationships) 2. Behavioral patterns (how they work, what they value) 3. Conflict flags (contradictions that need temporal reconciliation) Episodes: {chr(10).join(episodes)} Return JSON: {{"facts": [], "patterns": [], "conflicts": []}}""" response = await asyncio.to_thread( client.messages.create, model="claude-sonnet-4-6", max_tokens=1024, messages=[{"role": "user", "content": prompt}] ) # Parse and write distilled facts to semantic memory store return parse_consolidation_response(response.content[0].text)

🔒9. Security, Governance & EU AI Act

Memory systems are not just an engineering challenge — they are a legal and governance challenge. The EU AI Act (fully applicable from August 2026) requires 10-year audit trails for high-risk AI systems. GDPR's right to be forgotten applies to explicit agent memory stores. Think about that tension: you need to delete personal data on request while maintaining a decade of audit history. That requires architectural sophistication that most teams are only beginning to address.

| Security Risk | Description & Attack Vector | Mitigation | Regulation |

|---|---|---|---|

| Memory Poisoning | Attacker injects false memories via manipulated interactions ("I always prefer Option A") — agent learns incorrect user preferences that persist across sessions. | Human validation loops for high-stakes memory writes. Confidence scoring on all memory ingestion. Anomaly detection on preference changes. | EU AI Act Art. 9 (Risk Management) |

| Cross-Tenant Memory Leakage | In multi-tenant shared memory infrastructure, improper isolation allows one user's memory to surface during another's session — potentially exposing PII or confidential preferences. | Row-level security at DB layer (not just namespace separation). ACID transactions for memory operations. Regular isolation audits. Zero-trust memory access control. | GDPR Art. 5 (Data Minimization) |

| Memory Exfiltration | Attacker prompts agent to "recall everything you remember about [target]" — extracting a full semantic memory dump via legitimate query paths. | Rate limiting on memory retrieval queries. Output filtering for PII before memory injection into context. Scoped retrieval — agent can only access memories relevant to current task. | GDPR Art. 17 (Right to Erasure) |

| Stale Memory Harm | Agent acts on outdated semantic facts (e.g., former employer, outdated health status, past relationship) that should have been evicted but weren't due to missing TTL policies. | Mandatory TTL policies on all personal memory. Temporal reconciliation via arbiter on conflicting facts. User-initiated memory review dashboard. GDPR deletion workflows. | GDPR Art. 5 (Accuracy), EU AI Act Art. 13 |

| Audit Trail Gaps | No record of what memory was retrieved, when, by which agent, and how it influenced a decision. Impossible to reconstruct why the agent made a specific recommendation. | Immutable append-only audit log for all memory reads and writes. Log includes: user_id, agent_id, query, retrieved memory IDs, decision context. 10-year retention for high-risk AI systems. | EU AI Act Art. 12 (Logging), ISO 42001 |

| Right-to-Forget vs. Audit Conflict | GDPR requires deletion of personal data on request. EU AI Act requires 10-year audit trails. These requirements directly conflict for memory systems that store personal interactions. | Separate personal data store (deletable) from anonymized audit log (retained). Memory tombstoning: mark records as deleted without removing audit entries. Legal counsel required for implementation. | GDPR Art. 17 + EU AI Act Art. 12 |

🚫10. Anti-Patterns & Failure Modes

The most common memory system failures are not dramatic crashes — they are subtle, silent degradations that manifest as slightly worse agent responses over time, until the agent becomes unreliable. Understanding these failure modes before building is far less costly than discovering them in production.

📊11. Performance Benchmarks & Metrics

Measuring memory system quality requires purpose-built benchmarks. Standard NLP benchmarks miss the unique properties of agent memory — long interaction histories, temporal reasoning, and multi-hop fact retrieval. The field has converged on two primary benchmarks: LOCOMO (multi-session conversational memory) and LongMemEval (long-horizon memory evaluation).

| Application | Accuracy | Response Time | User Satisfaction | Memory Scope |

|---|---|---|---|---|

| LinkedIn Hiring Assistant | 92% | 40ms (avg) | 85% | Individual + org + industry collective |

| Enterprise Customer Service Agent | 88% | 60ms (avg) | 80% | Episodic + semantic per customer |

| AI Recommendation System | 90% | 50ms (avg) | 82% | Semantic preference graph |

| Long-running Coding Agent | 87% | 80ms (avg) | 79% | Procedural + episodic (project scoped) |

| Healthcare Decision Support | 94% | 90ms (avg) | 88% | Episodic + semantic + human validation |

🔭12. Future Directions — MemOS, MAGMA & Beyond

The research frontier for agent memory is moving fast. The ICLR 2026 MemAgents workshop brought together researchers from generative AI, reinforcement learning, cognitive psychology, and neuroscience to converge on the next generation of memory architectures. Three directions stand out as having near-term production impact.

The Memory Imperative

LinkedIn's CMA reflects a broader truth about production AI: models are commodities; memory infrastructure is the moat. The organizations that will build genuinely useful, persistent, personalized AI agents are not those with the most powerful foundation models, but those with the most thoughtful memory architecture — clear CoALA taxonomy, lifecycle discipline, retrieval strategies tuned for their latency-accuracy requirements, and governance that satisfies the EU AI Act before it becomes a liability.

Start with episodic memory and a single retrieval strategy. Measure quality with LoCoMo or LongMemEval. Add semantic consolidation only when episodic alone is insufficient. Build governance before you scale. The memory layer is the infrastructure that turns a capable LLM into an agent that actually compounds value over time.